What is Nebula Exchange¶

Nebula Exchange (Exchange) is an Apache Spark™ application for bulk migration of cluster data to NebulaGraph in a distributed environment, supporting batch and streaming data migration in a variety of formats.

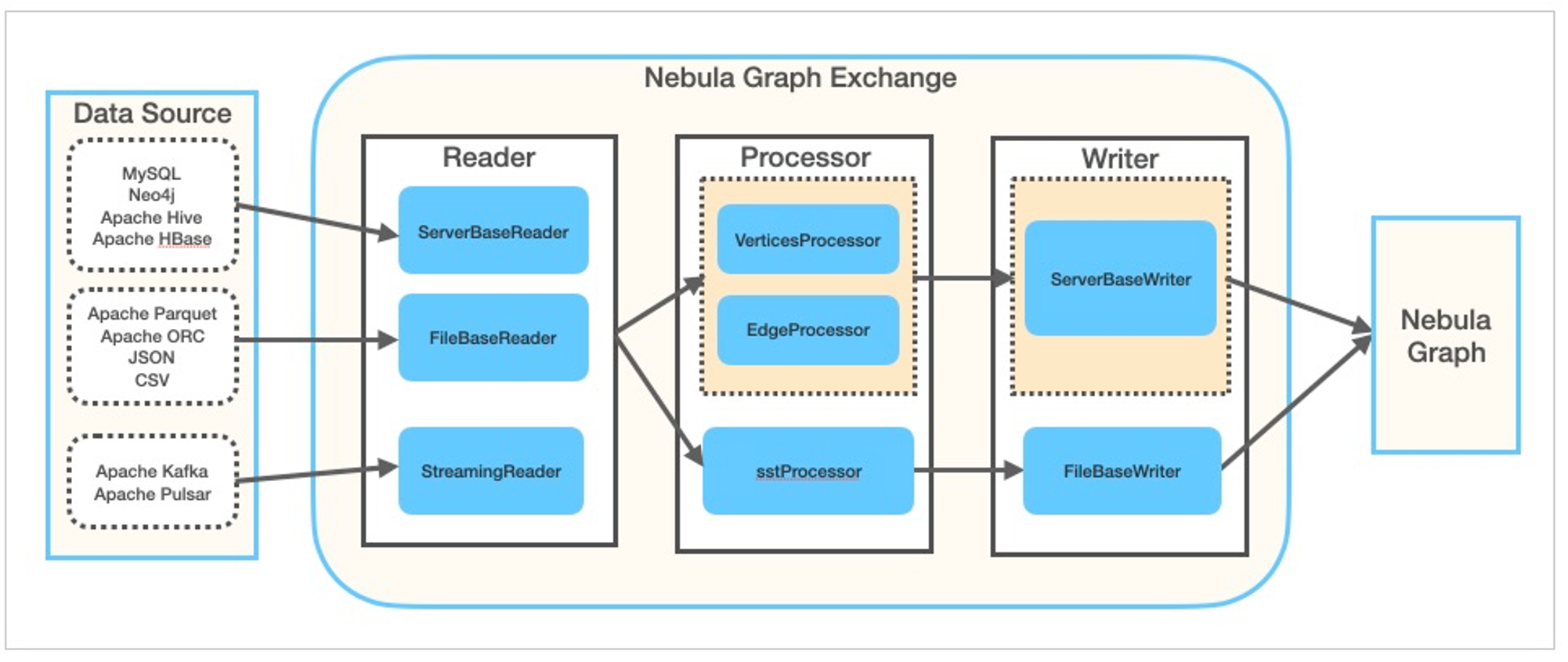

Exchange consists of Reader, Processor, and Writer. After Reader reads data from different sources and returns a DataFrame, the Processor iterates through each row of the DataFrame and obtains the corresponding value based on the mapping between fields in the configuration file. After iterating through the number of rows in the specified batch, Writer writes the captured data to the NebulaGraph at once. The following figure illustrates the process by which Exchange completes the data conversion and migration.

Editions¶

Exchange has two editions, the Community Edition and the Enterprise Edition. The Community Edition is open source developed on GitHub. The Enterprise Edition supports not only the functions of the Community Edition but also adds additional features. For details, see Comparisons.

Scenarios¶

Exchange applies to the following scenarios:

- Streaming data from Kafka and Pulsar platforms, such as log files, online shopping data, activities of game players, information on social websites, financial transactions or geospatial services, and telemetry data from connected devices or instruments in the data center, are required to be converted into the vertex or edge data of the property graph and import them into the NebulaGraph database.

- Batch data, such as data from a time period, needs to be read from a relational database (such as MySQL) or a distributed file system (such as HDFS), converted into vertex or edge data for a property graph, and imported into the NebulaGraph database.

- A large volume of data needs to be generated into SST files that NebulaGraph can recognize and then imported into the NebulaGraph database.

-

The data saved in NebulaGraph needs to be exported.

Enterpriseonly

Exporting the data saved in NebulaGraph is supported by Exchange Enterprise Edition only.

Advantages¶

Exchange has the following advantages:

- High adaptability: It supports importing data into the NebulaGraph database in a variety of formats or from a variety of sources, making it easy to migrate data.

- SST import: It supports converting data from different sources into SST files for data import.

- SSL encryption: It supports establishing the SSL encryption between Exchange and NebulaGraph to ensure data security.

-

Resumable data import: It supports resumable data import to save time and improve data import efficiency.

Note

Resumable data import is currently supported when migrating Neo4j data only.

- Asynchronous operation: An insert statement is generated in the source data and sent to the Graph service. Then the insert operation is performed.

- Great flexibility: It supports importing multiple Tags and Edge types at the same time. Different Tags and Edge types can be from different data sources or in different formats.

- Statistics: It uses the accumulator in Apache Spark™ to count the number of successful and failed insert operations.

- Easy to use: It adopts the Human-Optimized Config Object Notation (HOCON) configuration file format and has an object-oriented style, which is easy to understand and operate.

Data source¶

Exchange 3.0.0 supports converting data from the following formats or sources into vertexes and edges that NebulaGraph can recognize, and then importing them into NebulaGraph in the form of nGQL statements:

- Data stored in HDFS or locally:

-

Data repository:

- Graph database: Neo4j (Client version 2.4.5-M1)

- Relational database:

- Column database: ClickHouse

- Stream processing software platform: Apache Kafka®

- Publish/Subscribe messaging platform: Apache Pulsar 2.4.5

In addition to importing data as nGQL statements, Exchange supports generating SST files for data sources and then importing SST files via Console.

In addition, Exchange Enterprise Edition also supports exporting data to a CSV file using NebulaGraph as data sources.